To sum it up

All in all, Text Deduplicator Plus is a straightforward application with the sole purpose of keeping a single copy of each line inside a text document. The original file is not overwritten, and you can specify the save location and name before cleaning the document. All duplicate lines are removed at the press of a button, with a new file saved in the same location. There is an option to sort, but it doesn’t take the counter into consideration and only arranges lines in alphabetical order. Sadly, a lengthy document can be rather difficult to analyze with the duplicate counter, because counting only appends the number of occurrences after each line, with no highlighting and no option to sort by the number of duplicates.
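To make the behavior described above concrete, here is a minimal Java sketch of line deduplication with an occurrence counter, plus the sort-by-count option the review finds missing. It is not the application's actual implementation: the class `LineDeduplicator` and its method names are hypothetical, and lines are held in memory rather than read from a TXT or LOG file.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical helper for illustration; not part of Text Deduplicator Plus.
class LineDeduplicator {

    // Counts how often each line occurs, keeping first-seen order.
    static Map<String, Integer> countLines(List<String> lines) {
        Map<String, Integer> counts = new LinkedHashMap<>();
        for (String line : lines) {
            counts.merge(line, 1, Integer::sum);
        }
        return counts;
    }

    // Returns one copy of each line, in the order lines first appeared.
    static List<String> deduplicate(List<String> lines) {
        return new ArrayList<>(countLines(lines).keySet());
    }

    // Returns unique lines ordered by descending occurrence count.
    // List.sort is stable, so ties keep their first-seen order.
    static List<String> sortByCount(List<String> lines) {
        Map<String, Integer> counts = countLines(lines);
        List<String> unique = new ArrayList<>(counts.keySet());
        unique.sort((a, b) -> Integer.compare(counts.get(b), counts.get(a)));
        return unique;
    }

    public static void main(String[] args) {
        List<String> lines = List.of("fig", "apple", "pear", "apple", "pear", "pear");
        System.out.println(deduplicate(lines)); // [fig, apple, pear]
        System.out.println(sortByCount(lines)); // [pear, apple, fig]
        System.out.println(countLines(lines));  // {fig=1, apple=2, pear=3}
    }
}
```

In a file-based variant, the input could come from `Files.readAllLines` and the result could be written to a different path with `Files.write`, which would leave the original file untouched, as the review describes.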

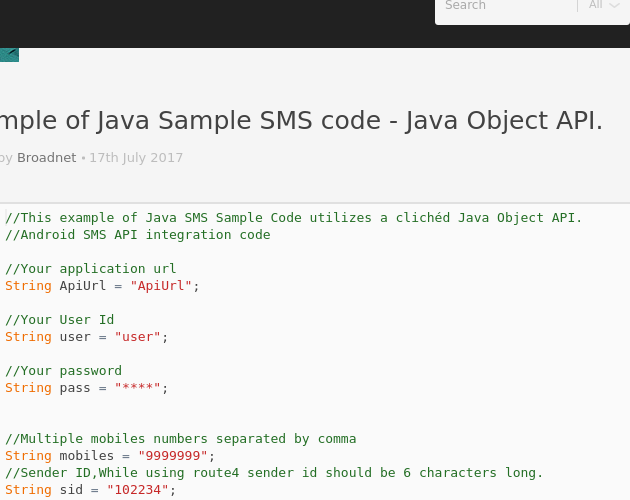

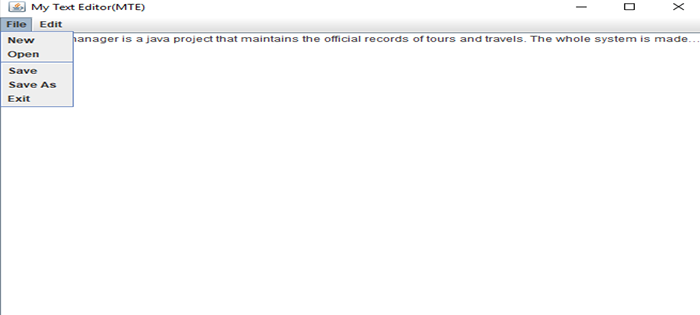

Duplicate counter, and automatic file save

Before the cleansing begins, there’s the possibility to simply count duplicates. Loaded text is shown right away, but it isn’t editable, which prevents accidental changes. A file can’t be added by dragging it over the main window, so you need to rely on the built-in browse dialog. The application works with plain text, but it can only read TXT and LOG files.

On the one hand, the application comes in a pretty lightweight package and requires no installation in order to function. Launching it after download is all it takes to bring up the main window and start cleaning text documents. On the other hand, this can be done from a USB flash drive on other computers as well, without affecting the stability of the target PC.

Creating large lists and updating them over a long period of time involves the risk of creating duplicates, especially if little attention is paid or multiple individuals add new lines without checking first. In this regard, Text Deduplicator Plus helps clean documents of duplicate lines with little effort on your part.

The crawler code works through the following steps:

- Check if you have already crawled the URLs (we are intentionally not checking for duplicate content in this example), then load the page: `Document document = Jsoup.connect(URL).get();`
- Parse the HTML to extract links to other URLs: `Elements linksOnPage = document.select("a");`
- Find all URLs that start with "" and add them to the HashSet: `Elements otherLinks = document.select("a");`
- If you want to see it running in your editor, remove the comment from the line that follows this step in the code.
- Connect to each link saved in the article and find all the articles in the page: `Elements articleLinks = document.select("h2 a");`
- Only retrieve the titles of the articles that contain Java 8: `if (article.text().matches("^.*?(Java 8|java 8|JAVA 8).…`

Building a Web Scraper also requires a crawl agent, and this article intends to inform as well as provide a viable example.
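The "check if you have already crawled the URL" step and the Java 8 title filter can be sketched without a network connection. The example below is an assumption-laden illustration, not the article's code: `VisitedSetCrawler` and `Page` are hypothetical names, pages come from an in-memory map instead of jsoup fetches, and a case-insensitive `Pattern` stands in for the article's title check.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;
import java.util.regex.Pattern;

// Hypothetical sketch: an in-memory "site" replaces real HTTP requests.
class VisitedSetCrawler {

    // Stand-in for a fetched page: its title and its outgoing links.
    static final class Page {
        final String title;
        final List<String> links;

        Page(String title, List<String> links) {
            this.title = title;
            this.links = links;
        }
    }

    // Assumed replacement for the article's regex-based title check.
    private static final Pattern JAVA_8 =
            Pattern.compile("java 8", Pattern.CASE_INSENSITIVE);

    private final Map<String, Page> site;
    private final Set<String> visited = new HashSet<>();
    private final List<String> matchingTitles = new ArrayList<>();

    VisitedSetCrawler(Map<String, Page> site) {
        this.site = site;
    }

    void crawl(String url) {
        // Set.add returns false if the URL was already recorded, so
        // each URL is processed at most once, even when links form a cycle.
        if (!visited.add(url)) {
            return;
        }
        Page page = site.get(url);
        if (page == null) {
            return; // link points outside the known site
        }
        if (JAVA_8.matcher(page.title).find()) {
            matchingTitles.add(page.title);
        }
        for (String link : page.links) {
            crawl(link);
        }
    }

    Set<String> visited() {
        return visited;
    }

    List<String> matchingTitles() {
        return matchingTitles;
    }

    public static void main(String[] args) {
        Map<String, Page> site = new HashMap<>();
        site.put("A", new Page("Home", List.of("B", "C")));
        site.put("B", new Page("Java 8 Streams", List.of("A")));
        site.put("C", new Page("JAVA 8 Optional", List.of("B", "D")));

        VisitedSetCrawler crawler = new VisitedSetCrawler(site);
        crawler.crawl("A");
        System.out.println(crawler.matchingTitles());
    }
}
```

The recursion mirrors the crawl-then-follow-links flow of the steps above. For a large site, an explicit queue would avoid deep call stacks, and a real crawler would replace the map lookup with an HTTP fetch and HTML parse.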

Truth be told, developing and maintaining one Web Crawler across all pages on the internet is difficult, if not impossible, considering that there are over 1 billion websites online right now. If you are reading this article, chances are you are not looking for a guide to create a Web Crawler but a Web Scraper. Why is the article called ‘Basic Web Crawler’ then? Well, because it’s catchy. Really! Few people know the difference between crawlers and scrapers, so we all tend to use the word “crawling” for everything, even for offline data scraping.
